The phrase "AI skin diagnosis" means very different things depending on who is using it and why. For a dermatologist or CRO running a decentralized efficacy study, it means quantitative, parameter-level skin assessment capable of tracking subtle biological changes over a treatment period - data that can be audited, published, and defended in a regulatory context.

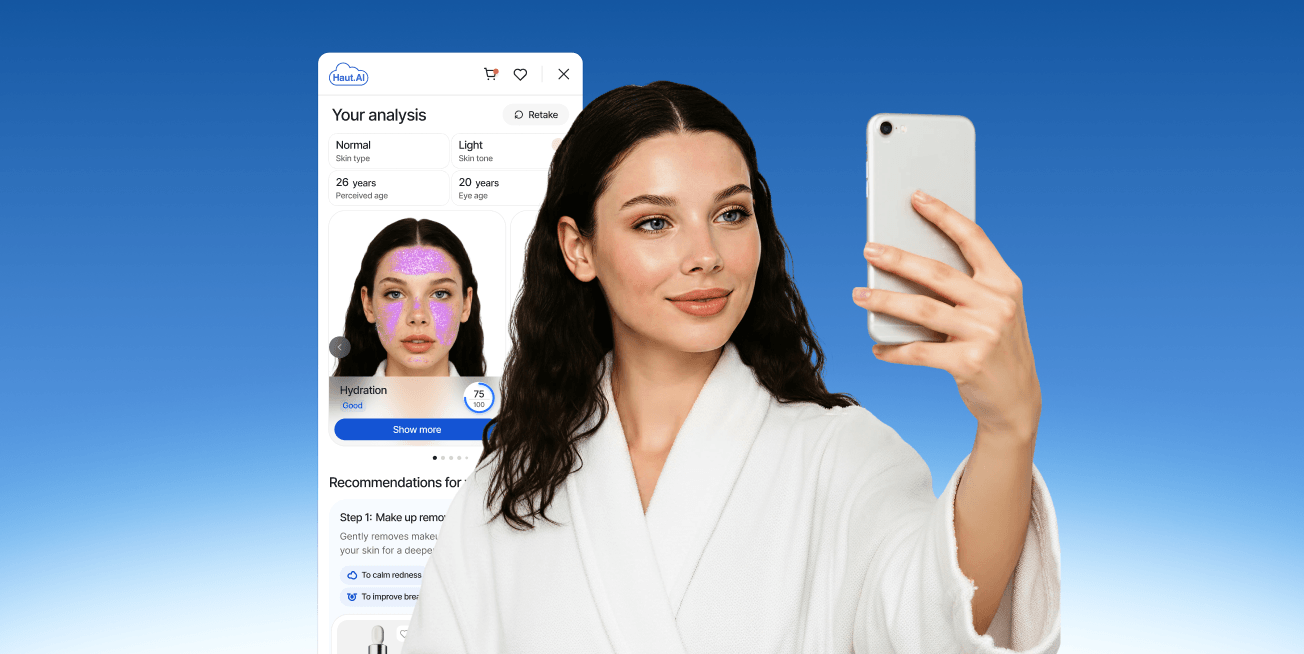

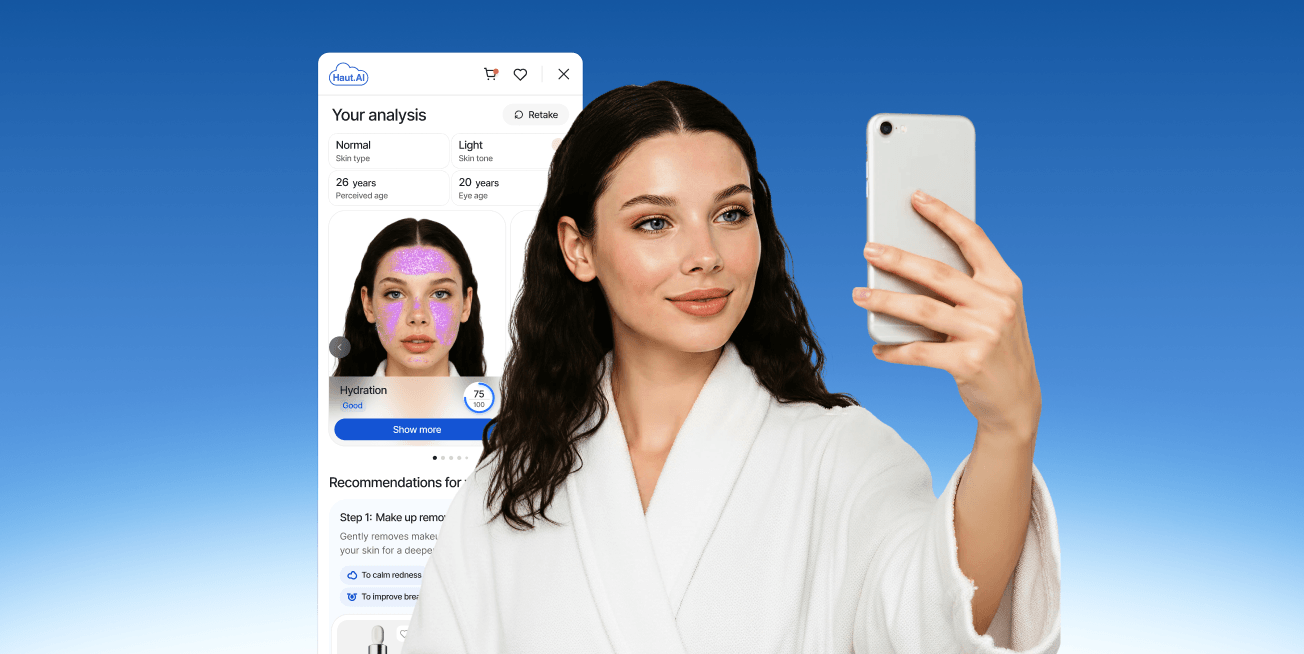

For a consumer visiting a skincare brand's website, it means something far simpler: a selfie-based analysis that identifies their skin type, flags their main concerns, and recommends products matched to their actual skin - in under ten seconds, without clinical training.

Both are real applications of AI skin analysis. Both are scientifically grounded. But they demand different outputs, different image quality standards, different regulatory considerations, and different success metrics.

This article explains how AI skin diagnosis works in each context, where the two overlap, and why the best platforms are built to serve both without compromising either.

A 2025 narrative review published in the Journal of Cosmetic Dermatology framed the problem precisely: traditional skin assessment methods "are inherently subjective, resulting in variable results, particularly in multi-center or international settings." AI addresses this by enabling "objective quantification of skin quality through high-dimensional image analysis, thereby reducing interobserver variability" (Pooth et al., 2025).

First, a critical clarification: AI skin diagnosis is not the same as medical diagnosis.

In a clinical or research context, AI skin analysis refers to the computer vision–based quantification of measurable skin parameters - redness, pigmentation distribution, pore density, wrinkle depth, acne severity, elasticity, perceived age - from standardized photographs. It does not identify diseases, prescribe treatments, or replace a dermatologist. It measures and quantifies what can be observed in an image, with a precision and consistency that human graders cannot match at scale.

In a consumer context, the same technology is applied to deliver a personalized skin profile that helps a shopper understand their skin and find products suited to it. The underlying model is identical; the output format, user interface, and use case are entirely different.
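To make the "same model, different output" point concrete, here is a minimal Python sketch. It assumes the model emits one continuous score per parameter on a 0-100 scale. `ParameterScore`, `GRADE_BANDS`, and the band cut-offs are hypothetical illustrations, not Haut.AI's actual scale or API.

```python
from dataclasses import dataclass

# Hypothetical grade bands on an assumed 0-100 severity scale: (upper bound, label).
GRADE_BANDS = [(25.0, "Low"), (50.0, "Mild"), (75.0, "Moderate"), (100.0, "High")]


@dataclass(frozen=True)
class ParameterScore:
    name: str      # e.g. "Pigmentation"
    value: float   # continuous output of the shared model (assumed 0-100)


def consumer_view(score: ParameterScore) -> str:
    """Collapse the continuous score into a readable grade for a shopper."""
    if not 0.0 <= score.value <= 100.0:
        raise ValueError(f"score out of range: {score.value}")
    for upper, label in GRADE_BANDS:
        if score.value <= upper:
            return f"{score.name}: {label}"
    return f"{score.name}: {GRADE_BANDS[-1][1]}"  # unreachable for valid input


def clinical_view(score: ParameterScore) -> dict:
    """Keep the raw number so it can feed change-from-baseline statistics."""
    return {"parameter": score.name, "score": score.value}
```

For `ParameterScore("Pigmentation", 62.4)`, `consumer_view` returns `"Pigmentation: Moderate"`, while `clinical_view` keeps `62.4` for downstream analysis. Both views read the same analysis result; only the presentation differs.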

Haut.AI's Face Analysis 3.0 analyzes facial skin across 40+ parameters from a single portrait photo - running in under five seconds on 95% of images, trained on 3 million+ skin images, and validated by dermatologists across diverse skin tones, ethnicities, and age groups. That same model serves both a CRO running a 12-week efficacy study and a beauty brand running a DTC skin consultation tool for a million consumers a month.

A 2022 review in the Journal of Clinical Medicine confirmed that modern deep learning models operating on standardized image inputs can outperform specialist graders by measurable margins - one comparative analysis found that deep learning architectures exceeded specialist classification accuracy by at least 11% when input image quality was controlled (Li et al., 2022). The operative qualifier is image quality - a point we return to in detail when discussing LIQA™.

Traditional skincare efficacy studies rely on trained clinical graders who assess participants' skin against standardized scales - rating acne severity, wrinkle depth, or pigmentation uniformity on a 0–5 or 0–10 scale. This approach has well-documented limitations: scores depend on who does the grading, can drift between timepoints, and vary from one study site to another.

The variability problem is not theoretical. A 2022 study published in the Journal of Cosmetic Dermatology compared four widely used acne grading scales across multiple countries and found significant inter-rater disagreement across all four systems, particularly at severity boundaries - where treatment effects are most clinically meaningful (Yu et al., 2022).
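Agreement between graders on ordinal scales is commonly summarized with Cohen's kappa, where 1.0 means perfect agreement and 0 means agreement no better than chance. The plain-Python sketch below computes unweighted and quadratic-weighted kappa for two graders. It illustrates the kind of statistic used in such comparisons; it is not necessarily the analysis Yu et al. performed.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b, n_categories, weights="none"):
    """Cohen's kappa for two graders scoring the same items on a 0..n_categories-1 scale.

    weights="none": classic kappa, every disagreement counts the same.
    weights="quadratic": a 1-vs-4 disagreement costs more than a 2-vs-3 one.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty lists of grades")
    if n_categories < 2:
        raise ValueError("need at least two grade categories")
    if weights not in ("none", "quadratic"):
        raise ValueError("weights must be 'none' or 'quadratic'")
    if any(not 0 <= g < n_categories for g in (*rater_a, *rater_b)):
        raise ValueError("grades must lie in 0..n_categories-1")
    n, k = len(rater_a), n_categories

    def w(i, j):
        # Disagreement weight between grade i and grade j
        if weights == "quadratic":
            return (i - j) ** 2 / (k - 1) ** 2
        return 0.0 if i == j else 1.0

    pairs = Counter(zip(rater_a, rater_b))
    marg_a, marg_b = Counter(rater_a), Counter(rater_b)
    # Weighted disagreement actually observed vs. expected if grades were independent
    observed = sum(w(i, j) * c for (i, j), c in pairs.items()) / n
    expected = sum(w(i, j) * marg_a[i] * marg_b[j] for i in range(k) for j in range(k)) / n**2
    if expected == 0:
        return 1.0  # both graders used one identical grade: kappa undefined, treated as agreement
    return 1.0 - observed / expected
```

Quadratic weighting is often preferred for severity scales because it distinguishes near-misses at a severity boundary from gross disagreements.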

For wrinkle assessment, the landmark validation of the Wrinkle Severity Rating Scale acknowledged that while trained observers can achieve acceptable intra-rater consistency, absolute grading values still differ meaningfully between raters in multi-center settings (Narins et al., 2003).

AI skin analysis addresses each of these. A consistent algorithmic model produces the same scores for the same image regardless of who is running the study, when it is run, or where participants are located.

A 2023 review in Skin Health and Disease confirmed that computer-assisted facial imaging systems provide "objective quantitative evaluation of surface and subsurface facial skin conditions" across pigmentation, inflammation, vascular features, wrinkles, pores, and texture - reducing the single-rater bias that has historically plagued multi-center dermatology studies (Zhang et al., 2023).

The most significant shift AI enables in clinical skin research is decentralization. Participants submit photos from their own devices - guided by a standardized smart camera system - rather than travelling to a clinical site. The AI measures the same parameters at baseline, week 4, week 8, and endpoint, with the same precision at each timepoint.

The evidence for decentralized clinical trials (DCTs) is accumulating rapidly. A 2025 critical analysis of 23 decentralized trials conducted between 2011 and 2024, published in Clinical and Translational Science, found that 11 of 13 trials reported improvements in recruitment and 7 reported positive retention outcomes compared with traditional site-based designs - with one trial (REACT-AF) documenting a 50% cost reduction while expanding data collection capabilities (Wang et al., 2025).

A 2021 analysis in Applied Clinical Trials estimated a near five-fold return on investment for DCT methods in phase II trials, rising to a 13-fold ROI in phase III.

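Haut.AI's Study and Data Collection Tool (DCT) is built specifically for this workflow: participants capture guided photos on their own devices, and the same parameters are measured at every timepoint from baseline to endpoint.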

The commercial result: Haut.AI's clinical product can make skincare efficacy studies up to 3x cheaper than traditional centralized trial designs - by eliminating site management costs, reducing grader teams, and enabling automated data collection and reporting.

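Clinical and R&D users require a fundamentally different output format from consumer users. Where a consumer sees a readable grade ("Pigmentation: Moderate") and a product recommendation, a clinical researcher needs continuous, parameter-level scores, change from baseline at each timepoint, and comparisons against a control group.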

The Face Analysis 3.0 Full Access Add-on provides all of these outputs for 15+ advanced parameters - including Acne Inflammation, Deep Lines, Fine Lines, Enlarged Pores, Freckles, Melasma, Sun Spots, Nasolabial Wrinkles, and Irritation - with additional parameters in development.

This is the data layer that powers clinical publications, claims substantiation reports, and ingredient dossiers. The same analysis that tells a consumer "you have moderate sun spots" tells a clinical team "spot area increased by 3.2% from baseline at week 4 versus the control group."
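A minimal sketch of how a control-adjusted "change from baseline" figure can be derived from per-participant scores. The arm data and helper names are hypothetical, and a real claims analysis would add a statistical test and confidence intervals rather than report the raw difference alone.

```python
from statistics import mean


def pct_change(baseline, followup):
    """Per-participant % change from baseline (paired by position)."""
    if len(baseline) != len(followup) or not baseline:
        raise ValueError("need non-empty, paired baseline and follow-up scores")
    if any(b == 0 for b in baseline):
        raise ValueError("% change is undefined for a zero baseline")
    return [100.0 * (f - b) / b for b, f in zip(baseline, followup)]


def control_adjusted_change(treat_base, treat_follow, ctrl_base, ctrl_follow):
    """Mean % change in the treated arm minus mean % change in the control arm."""
    treat = mean(pct_change(treat_base, treat_follow))
    ctrl = mean(pct_change(ctrl_base, ctrl_follow))
    return {"treatment_pct": treat, "control_pct": ctrl, "difference_pp": treat - ctrl}


if __name__ == "__main__":
    # Made-up spot-area scores for three participants per arm, baseline vs. week 4
    result = control_adjusted_change(
        [10.0, 12.0, 8.0], [9.0, 10.8, 7.6],   # treatment arm
        [10.0, 11.0, 9.0], [10.2, 11.0, 9.0],  # control arm
    )
    print({key: round(val, 2) for key, val in result.items()})
```

With these made-up values the treated arm changes by about -8.3%, the control arm by about +0.7%, giving a control-adjusted difference of about -9.0 percentage points.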

Beyond measurement, clinical teams increasingly need to visualize the changes their products produce - for internal presentations, regulatory submissions, and consumer-facing claims content.

SkinGPT High-Resolution outputs images at up to 6K×8K pixels (48 megapixels) - print-quality, photorealistic simulations of skin change derived from a brand's own clinical trial data. The study data that generates Face Analysis 3.0 statistical outputs also feeds the SkinGPT simulation model, creating a clinically defensible visual asset that shows what the product does to skin over time.
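The pixel arithmetic behind the "6K×8K" figure is easy to check. This sketch assumes "6K×8K" means exactly 6000×8000 pixels stored as 8-bit RGB; the on-disk size of an actual file depends on its format and compression, which the text does not specify.

```python
def image_footprint(width_px, height_px, channels=3, bytes_per_channel=1):
    """Return (megapixels, uncompressed size in MB) for an image.

    MB here means 10**6 bytes. Real file sizes depend on format and compression.
    """
    pixels = width_px * height_px
    raw_bytes = pixels * channels * bytes_per_channel
    return pixels / 1e6, raw_bytes / 1e6
```

`image_footprint(6000, 8000)` returns `(48.0, 144.0)`: 48 megapixels, or 144 MB of raw 8-bit RGB data before any compression.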

SkinGPT's simulation outputs are correlated with the VISIA clinical measurement system - the industry-standard device for quantitative skin photography in dermatological research. VISIA's precision is well established in peer-reviewed literature: a 2022 validation study published in GMS Interdisciplinary Plastic and Reconstructive Surgery found that 88.9% of measurement correlations across VISIA's eight skin parameters reached statistical significance, with the top 50% of absolute correlations exceeding r=0.945 (Henseler, 2022a).

A separate reproducibility study confirmed satisfactory precision for repeated captures, with absolute scores preferred over percentile rankings for their higher consistency across sessions (Henseler, 2022b). VISIA correlation is the standard by which AI skin measurement tools establish clinical credibility - and it is the benchmark against which Face Analysis 3.0's outputs are validated.
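Both kinds of evidence in this section rest on simple statistics. The sketch below shows a Pearson correlation, as might be applied to paired scores from two systems (say, an AI model and VISIA) on the same captures, and a coefficient of variation (CV) across repeated captures of the same face. The pairing and repeat-capture setups are illustrative assumptions; the text does not describe the exact validation protocol, and these are not necessarily the analyses used in the cited Henseler studies.

```python
from math import sqrt
from statistics import mean, stdev


def pearson_r(x, y):
    """Pearson correlation between paired scores for the same captures."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired measurements")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / sqrt(sxx * syy)


def coefficient_of_variation(repeats):
    """CV (%) across repeated captures of the same face; lower means more reproducible."""
    if len(repeats) < 2:
        raise ValueError("need at least two repeated captures")
    m = mean(repeats)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100.0 * stdev(repeats) / abs(m)
```

Following the preference reported above, the repeat-capture inputs would be absolute scores rather than percentile rankings.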

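Consumer AI skin analysis uses the same underlying model as clinical analysis, but the design constraints are fundamentally different: results must arrive in seconds, read clearly without clinical training, and hold up on photos taken in uncontrolled conditions.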

The biggest technical challenge in consumer AI skin diagnosis is image variability. A clinical study controls for lighting, angle, and camera settings. A consumer on a brand's DTC website does not.

This matters more than most teams realize. A 2022 review of AI dermatology applications in the Journal of Clinical Medicine stated directly: "high-quality images are decisive for the diagnostic accuracy, sensitivity and specificity of the final trained AI algorithm" (Li et al., 2022).

A 2023 review of facial imaging systems in Skin Health and Disease identified the specific failure modes: uneven lighting, facial expressions, head positioning, and residual cosmetics or sunscreen all introduce artifacts that degrade measurement accuracy in ways that are invisible to the user but statistically significant in the output (Zhang et al., 2023).

Research comparing AI performance across environments confirms the pattern: diagnostic performance in specialist settings (AUROC ~0.90) consistently exceeds performance in uncontrolled smartphone environments (AUROC ~0.81) - a gap attributable largely to input image quality rather than model capability.
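AUROC can be read as the probability that a randomly chosen positive case receives a higher model score than a randomly chosen negative case. This minimal sketch computes it directly from that definition (the Mann-Whitney formulation). It is quadratic in the number of cases, which is fine for illustration but not for large evaluations.

```python
def auroc(scores, labels):
    """Area under the ROC curve via pairwise comparison (ties count as half).

    labels: 1 for positive cases, 0 for negative cases.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))
```

Read this way, an AUROC of 0.90 versus 0.81 means the model ranks a positive case above a negative one roughly 90% versus 81% of the time.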

LIQA™ (Live Image Quality Assurance) is Haut.AI's answer. It is a smart camera layer that guides the consumer to capture a usable image in real time - checking lighting uniformity, face angle, distance from camera, and motion blur before the image is even taken. The consumer sees live feedback ("Move closer," "Reduce glare") rather than receiving a poor-quality analysis from a bad photo.
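How LIQA™ is implemented internally is not described here. As an illustration only, the sketch below shows the general shape of a capture-quality gate: simple heuristics on a grayscale frame that return live hints, with an empty list meaning the frame looks usable. All thresholds, the hint strings other than "Move closer" and "Reduce glare", and the `face_width_px` and `yaw_deg` inputs (assumed to come from an upstream face detector) are hypothetical.

```python
# Hypothetical thresholds for 8-bit grayscale frames; not LIQA's real values.
MIN_BRIGHTNESS, MAX_BRIGHTNESS = 60, 200
MAX_SIDE_DIFF = 40        # max mean-brightness gap between left and right halves
MIN_FACE_FRACTION = 0.3   # face width / frame width; smaller means too far away
MAX_YAW_DEG = 15          # head turned too far from frontal
MIN_SHARPNESS = 100       # Laplacian variance below this suggests blur


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian over interior pixels; low values suggest blur."""
    h, w = len(gray), len(gray[0])
    vals = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1] - 4 * gray[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    m = sum(vals) / len(vals)
    return sum((v - m) ** 2 for v in vals) / len(vals)


def capture_feedback(gray, face_width_px, yaw_deg):
    """Return live hints for one frame; an empty list means the frame looks usable.

    gray: 2-D list of 0-255 intensities (hypothetical input format).
    """
    if len(gray) < 3 or len(gray[0]) < 3:
        raise ValueError("frame too small")
    h, w = len(gray), len(gray[0])
    half = w // 2
    mean_all = sum(map(sum, gray)) / (h * w)
    left = sum(sum(row[:half]) for row in gray) / (h * half)
    right = sum(sum(row[half:]) for row in gray) / (h * (w - half))

    hints = []
    if mean_all < MIN_BRIGHTNESS:
        hints.append("Find more light")
    elif mean_all > MAX_BRIGHTNESS:  # overexposed frame
        hints.append("Reduce glare")
    if abs(left - right) > MAX_SIDE_DIFF:  # one side of the face much brighter
        hints.append("Face the light evenly")
    if face_width_px / w < MIN_FACE_FRACTION:
        hints.append("Move closer")
    if abs(yaw_deg) > MAX_YAW_DEG:
        hints.append("Look straight at the camera")
    if laplacian_variance(gray) < MIN_SHARPNESS:
        hints.append("Hold still")
    return hints
```

A production system would more likely use learned models on live video; variance of the Laplacian is simply one widely used blur heuristic, chosen here because it fits in a few lines.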

LIQA™ brings consumer image quality closer to clinical standards - not by requiring professional equipment, but by intelligently guiding the capture process. The same technology is used in both Haut.AI's clinical study tool and its consumer-facing widget products, which is why brands like Beiersdorf can generate data in a clinical study context and apply the same model to consumer experiences with consistent output quality.

Most AI skin analysis platforms are built for one context or the other. Consumer beauty tech companies build recommendation widgets. Clinical AI companies build research tools for dermatologists. Very few platforms were designed to do both, from the ground up, with the same underlying model.

A 2025 review of AI in aesthetic and cosmetic dermatology noted that AI systems can assess "over 1000 variables" when analyzing facial images - but that the clinical and commercial value of those variables is only realized when the system is built to serve both structured research output and consumer-legible recommendations from the same analysis layer (Pooth et al., 2025). That architectural choice, made at the platform level, is what separates category-leading tools from single-use applications.

This matters for three reasons:

1. Clinical accuracy flows downstream to consumers.

When the same model that runs in a 12-week CRO study also powers a DTC widget, consumers get clinical-grade precision - not a dumbed-down approximation. The 40+ parameters, the 3 million+ training images, the dermatologist validation: all of that reaches the consumer through the same analysis engine.

The scientific literature on deep learning-based cosmetic product recommendation confirms the direction: a 2024 study in the Journal of Cosmetic Dermatology demonstrated that AI systems trained on validated dermatological image datasets can generate skin condition profiles that meaningfully improve recommendation specificity over questionnaire-based approaches (Lee et al., 2024).
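As a rough illustration of profile-driven recommendation, the sketch ranks catalog products by how much of the user's measured concern severity they target. The 0-1 severity scale, concern names, and catalog format are hypothetical, and this is neither the method of Lee et al. (2024) nor Haut.AI's recommender.

```python
def rank_products(profile, catalog, top_k=3):
    """Rank products by the total measured severity of the concerns they target.

    profile: concern -> severity in [0, 1] from image analysis (hypothetical scale).
    catalog: product name -> set of concerns the product claims to target.
    Products that match none of the user's measured concerns are dropped.
    """
    def match(concerns):
        return sum(profile.get(c, 0.0) for c in concerns)

    scored = [(match(concerns), name) for name, concerns in catalog.items()]
    # Highest match first; ties broken alphabetically for a stable result.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in scored if score > 0][:top_k]
```

Summing matched severities favors products that target several concerns at once; normalizing by the number of targeted concerns is an alternative if that bias is unwanted.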

2. Brands can close the loop between R&D and commerce.

Beiersdorf is the clearest example. Their R&D teams use Haut.AI's clinical tools to run efficacy studies; their commercial teams use the same platform to turn those study results into SkinGPT visual assets and consumer-facing skin consultations. The data doesn't leave the platform - it flows from clinical proof to commercial application.

3. Proprietary clinical data becomes a competitive moat.

A brand that runs an efficacy study through Haut.AI's DCT tool, generates Face Analysis 3.0 statistical outputs, and builds a SkinGPT simulation from that study data has created an asset that no competitor can replicate. The simulation is unique to that product, validated against real clinical data, and permanently owned by the brand.